It’s easy to get confused when approaching AI visibility, whether it’s your first time or you’ve already spent months on it. Talk about AEO (answer engine optimization) often sounds like this: “Optimize for LLMs. Be present in AI search. Adapt your SEO strategy.” But when you ask a simple question (how exactly?), the answers get vague very quickly: track mentions, create content, be authoritative.

That’s not wrong. It’s just not enough.

Most teams approach AI visibility the same way they approached SEO: track what shows up, compare competitors, create more content, and wait for results. But LLMs don’t work like search engines. They don’t rank pages in a list. They generate answers. And to do that, they rely on something fundamentally different: mathematical representations of meaning. Not keywords. Not links. Not just authority. Meaning.

At a high level, LLMs convert text into vectors — numerical representations of concepts. This is what allows them to treat synonyms as similar, understand relationships between ideas, and group content by meaning rather than wording. So when a user asks a question, the model is not “searching for keywords.” It is trying to find the most semantically relevant concepts to construct an answer. That has a very important implication: your brand does not appear because you “rank.” Your brand appears because the model considers it a relevant concept.

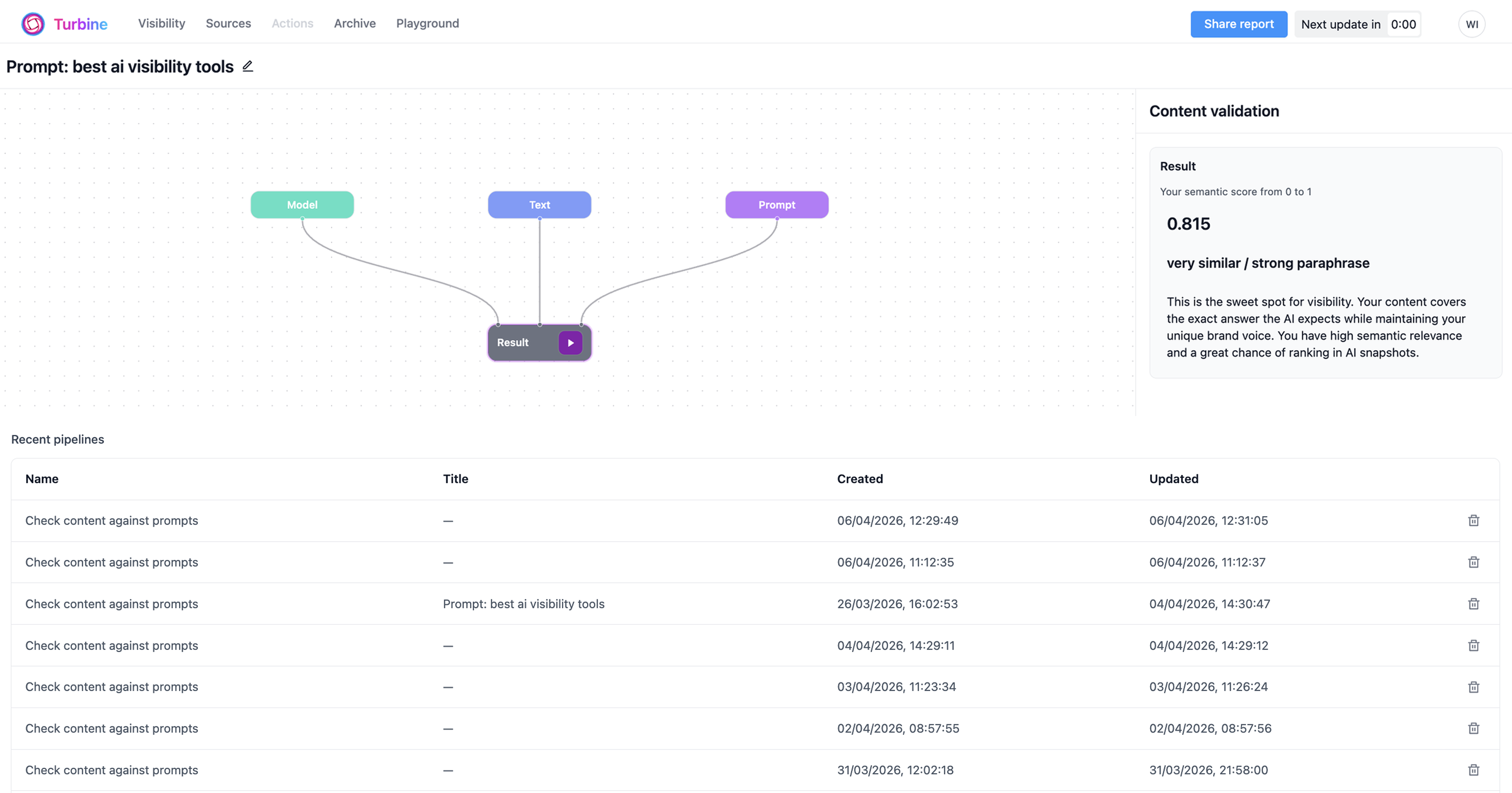
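To make "similar by meaning" a little more concrete, here is a minimal sketch of cosine similarity, a common way to compare two vectors. The three-dimensional vectors below are invented purely for illustration; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical, hand-made "embeddings" (assumption: not produced by any model).
toy_vectors = {
    "cheap flights": [0.9, 0.1, 0.0],
    "low-cost airfare": [0.8, 0.2, 0.1],
    "sourdough recipe": [0.0, 0.1, 0.9],
}

query = toy_vectors["cheap flights"]
scores = {
    phrase: cosine_similarity(query, vector)
    for phrase, vector in toy_vectors.items()
}

# Print phrases from most to least similar to the query.
for phrase, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{phrase}: {score:.3f}")
```

In this toy setup, "low-cost airfare" lands much closer to "cheap flights" than "sourdough recipe" does, even though the two phrases share no words. That is the property the rest of this article builds on.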

Most tools in the space today focus on tracking: are you mentioned, where do you rank in responses, and which sources are cited? That’s useful. But it answers only one question: what happened? It does not answer why it happened, why it didn’t happen, or what you should change to influence it. Without that layer, teams are left guessing. That’s why AI visibility feels unstable. Not because it’s random, but because the mechanism is not being measured.

This is where the framing needs to change. AI visibility is not just a marketing problem. It is a data science problem applied to marketing. Because once you accept that models operate in vector space, a few things become possible.

One is finding gaps: not just missing pages, but missing concepts. Monitor your niche for more than a week, identify the key players as LLMs see them, and plot them on a map: what concepts they own, and where you are in that space. Then investigate further. You might cover a topic broadly, but still lack the specific signals the model associates with it. That is why some brands never appear, even when they “should.”
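A rough sketch of what that map can look like in data form: tally which brands are mentioned for which concepts across the answers you collected, then list the concepts where your brand never shows up. The log format, brand names, and concept labels here are all hypothetical, and a simple co-mention count is only one possible stand-in for "ownership."

```python
from collections import Counter, defaultdict

# Hypothetical monitoring log: one entry per collected AI answer, tagged with
# the concept it covered and the brands it mentioned (all names are made up).
observations = [
    {"concept": "pricing transparency", "brands": ["AcmeCRM", "RivalOne"]},
    {"concept": "pricing transparency", "brands": ["RivalOne"]},
    {"concept": "data migration", "brands": ["RivalOne", "RivalTwo"]},
    {"concept": "data migration", "brands": ["RivalTwo"]},
    {"concept": "email automation", "brands": ["AcmeCRM"]},
]

def concept_ownership(observations):
    """Share of answers per concept in which each brand was mentioned."""
    answers = Counter()
    mentions = defaultdict(Counter)
    for obs in observations:
        answers[obs["concept"]] += 1
        for brand in set(obs["brands"]):  # count a brand once per answer
            mentions[obs["concept"]][brand] += 1
    return {
        concept: {b: n / answers[concept] for b, n in mentions[concept].items()}
        for concept in answers
    }

def missing_concepts(ownership, brand):
    """Concepts where the brand was never mentioned in the collected answers."""
    return sorted(c for c, shares in ownership.items() if brand not in shares)

ownership = concept_ownership(observations)
gaps = missing_concepts(ownership, "AcmeCRM")
print(gaps)
```

With this made-up log, the only gap for the hypothetical brand is "data migration," which is where the further investigation would start.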

Another important shift is in how content gets planned. Instead of relying only on traditional keyword logic, you can look at the semantic entities and concepts the model connects to a topic. That gives you a much more useful foundation for content creation.
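As a small illustration, suppose you already have a list of entities for a topic. How you obtain that list is outside this sketch, and the entities and draft text below are invented. A first check is simply which of those entities a draft mentions at all.

```python
import re

def entity_coverage(draft, entities):
    """Return (share of entities mentioned in the draft, list of missing ones)."""
    if not entities:
        raise ValueError("entities must not be empty")
    text = draft.lower()
    found = [
        entity for entity in entities
        # Whole-word, case-insensitive match of the entity phrase.
        if re.search(r"\b" + re.escape(entity.lower()) + r"\b", text)
    ]
    missing = [entity for entity in entities if entity not in found]
    return len(found) / len(entities), missing

# Hypothetical entity list for a topic (assumption: retrieved elsewhere).
topic_entities = ["lead scoring", "pipeline", "data migration", "API"]
draft = "Our CRM adds lead scoring to every pipeline and exposes a public API."

coverage, missing = entity_coverage(draft, topic_entities)
print(f"coverage: {coverage:.0%}, missing: {missing}")
```

Exact phrase matching is deliberately crude: it will miss paraphrases and synonyms, so treat it as a checklist rather than a measure of meaning.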

Instead of asking, “Do we have content on this topic?”, you can ask, “How close is our content to what the model considers a good answer?” And once you have a draft based on the semantic entities you’ve retrieved, the workflow changes. Instead of publish → wait → observe, you can do draft → evaluate → improve → publish. You are no longer just guessing whether something might work. You can estimate whether it is likely to.
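Here is a minimal sketch of the evaluate step in that loop. To keep it dependency-free it compares word counts rather than real embeddings, which is a swapped-in simplification: word counts do not capture synonyms, so in practice you would replace `bag_of_words` with vectors from an embedding model. The reference answer and both drafts are made up.

```python
import math
import re
from collections import Counter

def bag_of_words(text):
    """Crude stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def evaluate(draft, reference):
    """Score how close a draft is to a reference answer (0.0 to 1.0)."""
    return cosine(bag_of_words(draft), bag_of_words(reference))

# Hypothetical reference answer and two iterations of a draft.
reference = "a crm with lead scoring, pipeline tracking and guided data migration"
draft_v1 = "our crm is fast and friendly"
draft_v2 = "our crm offers lead scoring, pipeline tracking and guided data migration"

score_v1 = evaluate(draft_v1, reference)
score_v2 = evaluate(draft_v2, reference)
print(f"v1: {score_v1:.2f}  v2: {score_v2:.2f}")
```

The absolute numbers matter less than the direction: if a revision moves the score up against the same reference, the draft is getting closer before it is ever published.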

Let’s be clear: AI visibility will never be fully predictable. There are things you cannot control, such as how users phrase prompts, how models rewrite queries internally, how different models weight sources, and the randomness and variability in outputs. But that doesn’t mean everything is unpredictable. It means part of the system is measurable, and part of it isn’t. And right now, most teams are not measuring the part that can be measured.
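One way to measure the measurable part despite the randomness is to run the same prompt many times and report a mention rate with an uncertainty range instead of a single yes or no. The sketch below uses the Wilson score interval for a proportion; the counts are hypothetical, and the 95% level (z = 1.96) is an assumption you can change.

```python
import math

def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    )
    return center - half_width, center + half_width

# Hypothetical counts: the brand was mentioned in 12 of 40 repeated runs.
mentions, runs = 12, 40
low, high = wilson_interval(mentions, runs)
print(f"mention rate: {mentions / runs:.0%} (plausible range {low:.0%} to {high:.0%})")
```

A wide range is a signal to collect more runs before concluding that a content change helped or hurt.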

Search is shifting. Users are moving from “find me links” to “give me an answer.” That changes the role of a brand. You are no longer competing for a position on a page. You are competing to be considered, included, and cited. That requires a different approach. Not just better content, but better alignment with how models interpret that content.

AI visibility feels confusing because it is often treated as a black box. But it is not entirely a black box. It is a system built on math. And when you approach it that way, you stop guessing, you start measuring, and you get a path to improvement that is actually repeatable. The teams that win in AI search will not be the ones publishing the most content. They will be the ones who understand how models decide what belongs in an answer — and act on that understanding.